Activities

Discovery Research, User Interviews, Survey, Workshops, Mixed-methods

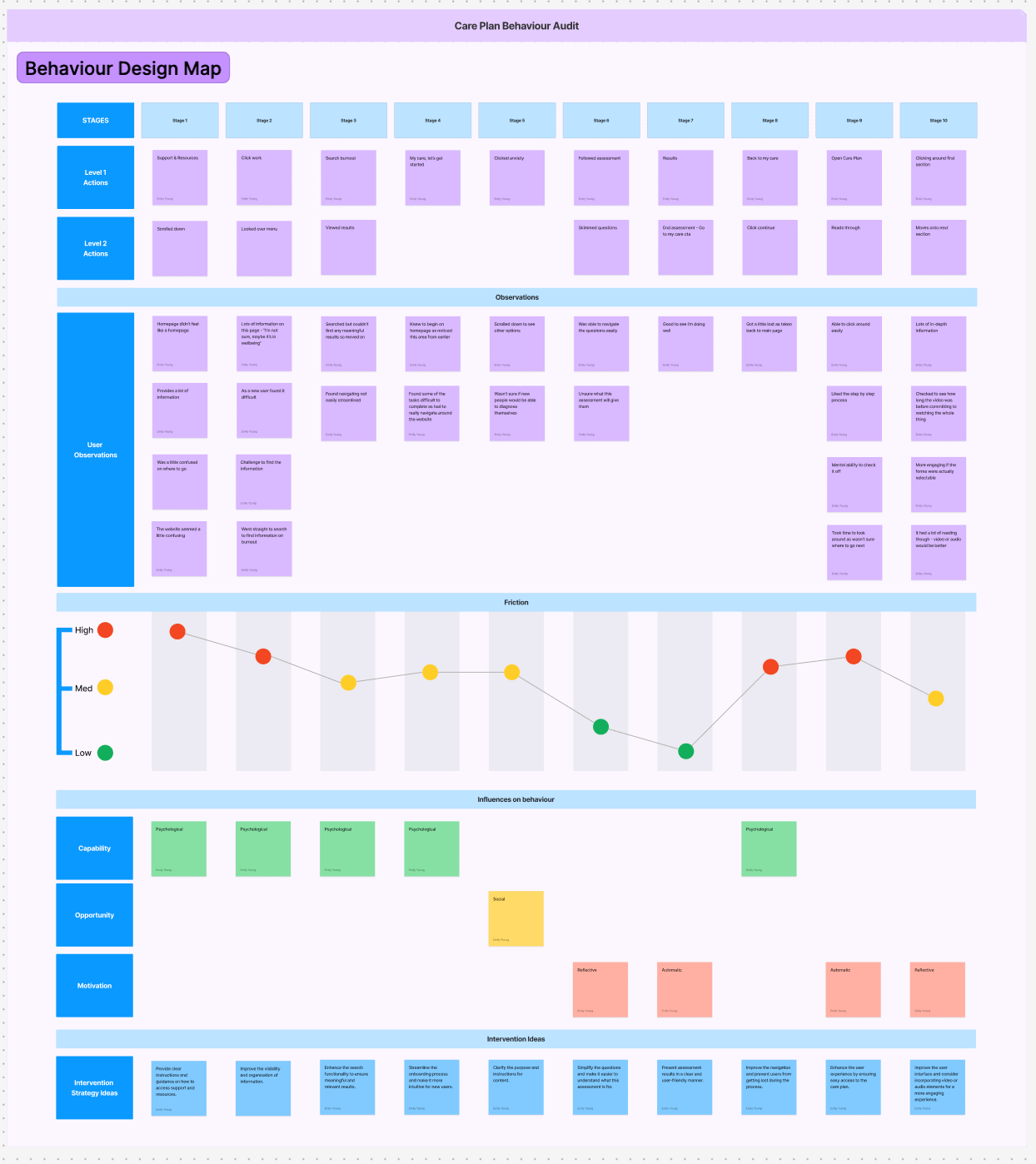

We identified that drop-off was less about “lack of interest” and more about uncertainty, overload, and low perceived value in the early flow. We translated the qualitative and behavioural-science diagnosis into a focused set of product bets: clearer orientation, better search/findability, and lightweight “investment loops” (tracking, quick activities) to drive repeat use.

My role: Lead UX Researcher and strategic partner. Led the problem framing, created a mixed evidence base (analytics + survey + interviews), and operationalised findings into a COM-B/TDF diagnosis and intervention directions.

Team / collaborators: Cross-functional partnership with Product, Design, and Clinical stakeholders.

Problem space: Engagement was collapsing at the exact moment users were asked to choose a “care issue” and commit to a Mental Health Care Plan (for example, for depression or anxiety). Nearly half of users started onboarding but dropped off before selecting a care issue, and only 10% completed onboarding and opened a care plan.

Why it mattered now: The business lacked confident evidence on what Care Plans should contain, how structured they should be, and what topics were worth prioritising. Without this, roadmap prioritisation and content investment were effectively guesswork, which put ROI/VOI at risk.

Research goals:

Constraints:

Method selection:

Participant strategy:

Lead-level “multiplier” move:

Stakeholder alignment under ambiguity:

Stakeholders explicitly called out that the team had “numbers but no context” and needed to avoid guessing (for example, “people only want therapy” versus “care plans lack interactivity”). The research reframed the conversation from opinions to testable behavioural barriers and evidence-backed product levers.

The pivot:

While “interactive tools” were an early hypothesis, the evidence broadened the problem: users were getting overwhelmed, struggling to find the right entry point, and not seeing a clear path after assessments.

Synthesis without a sticky-note photo dump:

I created an interview theme table and translated a large barrier set into COM-B/TDF domains, then connected each to intervention functions. That is the bridge from insight to roadmap.

Evidence: 23.1% are not sure which care issue to select, and 47% drop off before selecting one.

Recommendation direction: reduce cognitive load and uncertainty with guided selection, plain-language framing, and better orientation to what a care issue is and what happens next.

Evidence: 81% present with more than one issue; 56% have 2–3 issues.

Recommendation direction: support multi-topic pathways, allow “I’m not sure” entry points, and enable personalisation that does not require self-diagnosis.

Evidence: interview themes emphasise progression/tracking and short activities that fit life; workshop ideas repeatedly call for goals, check-ins, and visible progress without guilt.

Recommendation direction: introduce lightweight “investment loops” (trackers, quick wins, micro-commitments) and clearer progress/history views.

Evidence: interviews stress combining therapy and resources, and stakeholder notes highlight the need to connect the front end to providers so providers can reinforce Care Plan usage.

Recommendation direction: provider-facing visibility into care plan progress, and user-facing guidance that explains how self-guided work supports therapy.

Product/roadmap influence:

Reflections:

The organisation had funnel metrics but lacked explanatory insight and an operationalised research-to-roadmap mechanism. This project increased research maturity by creating a repeatable behavioural diagnosis approach and aligning qualitative evidence with measurable drop-off points.